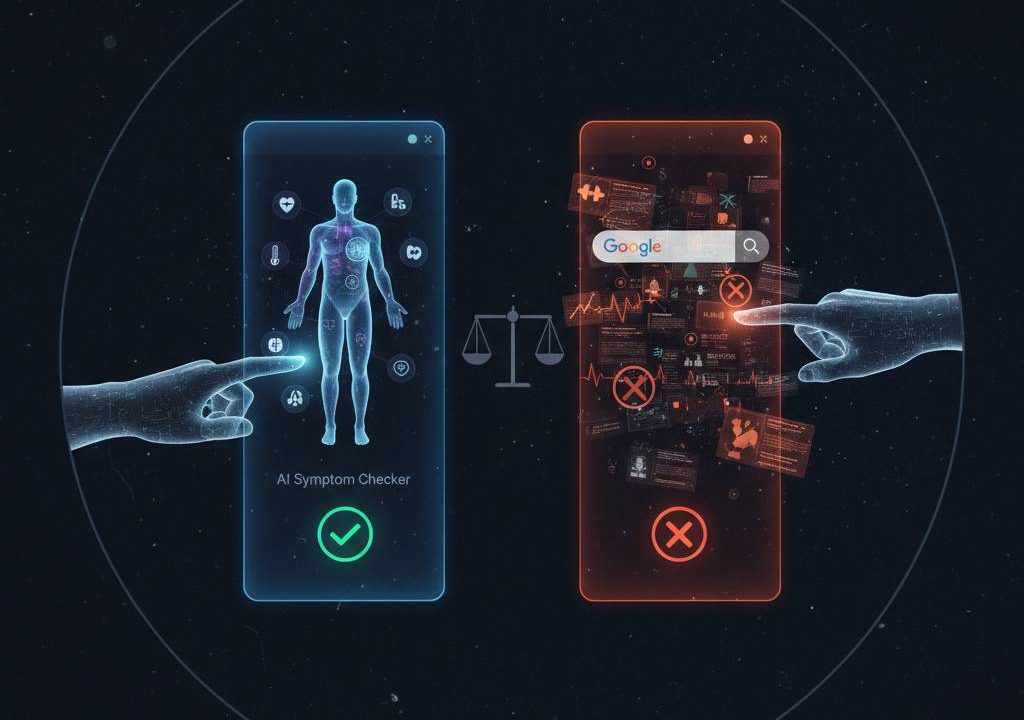

AI-powered symptom checkers offer structured triage guidance that outperforms Google's unstructured search results, but both carry a risk of misdiagnosis: studies report diagnostic accuracy of 36-70%, compared with triage safety above 80%. Understanding these limitations supports safer health decisions; neither tool replaces professional care.

How AI-Powered Symptom Checkers Work

These apps combine decision trees with machine learning (ML) models trained on millions of cases. They match reported symptoms against 800+ conditions and prioritize urgency over an exact diagnosis.

Diagnostic Algorithms and Triage

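Users enter their age, symptoms, and location. The AI asks follow-up questions, then outputs a triage level: self-care, GP visit, or emergency room (ER). Triage accuracy is higher than diagnostic accuracy, and triage is what matters most for safety.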
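To make the decision-tree idea concrete, here is a minimal Python sketch of rule-based triage. It is illustrative only: the three `Triage` levels mirror the outcomes above and the red-flag set borrows the examples under "Red Flags Requiring ER", but the function name, the age cut-offs, and the seven-day threshold are hypothetical assumptions, not rules from Ada, Healthily, Symptoma, or any clinical guideline. Real checkers use far larger trees combined with ML models.

```python
from enum import IntEnum


class Triage(IntEnum):
    """Triage levels, ordered from least to most urgent."""
    SELF_CARE = 0
    GP_VISIT = 1
    ER = 2


# Red flags that jump straight to the ER branch (examples only, not exhaustive).
RED_FLAGS = {"chest pain", "sudden weakness", "breathing difficulty"}


def triage(age: int, symptoms: set[str], days_unwell: int) -> Triage:
    """Walk a tiny, hypothetical decision tree; NOT clinically validated."""
    # Branch 1: any red-flag symptom means emergency care.
    if symptoms & RED_FLAGS:
        return Triage.ER
    # Branch 2: very young or older patients get a GP referral (hypothetical cut-offs).
    if age < 2 or age >= 75:
        return Triage.GP_VISIT
    # Branch 3: symptoms lasting more than a week warrant a GP visit (hypothetical).
    if days_unwell > 7:
        return Triage.GP_VISIT
    # Otherwise: self-care with monitoring.
    return Triage.SELF_CARE


print(triage(30, {"runny nose", "sore throat"}, 2).name)  # SELF_CARE
print(triage(45, {"chest pain"}, 0).name)                 # ER
```

Using `IntEnum` makes the levels directly comparable (`Triage.ER > Triage.GP_VISIT`), which helps when advice from several sources must be merged.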

Performance of Leading Tools

Ada, Healthily, and Symptoma lead the field. Symptoma reached 96% accuracy for COVID-19 when checked against PCR tests, and Healthily matched the 62% score of RCGP doctors, with 3.7% of its triage advice rated very unsafe.

Imperial's study critiques vignette-based benchmarking, in which tools are tested on written case scenarios rather than real patients.

Google Symptom Search Limitations

A search for "chest pain" returns about 10 million results mixing WebMD, forums, and ads; SEO-optimized content, not evidence, dominates.

SEO-Driven Results Bias

Top results favor sponsored clinics and supplement sellers over peer-reviewed sources. Search does not combine multiple symptoms into an assessment, so users self-diagnose from snippets.

Misinformation Prevalence

Forums amplify anecdotes, while information on rare diseases is buried. With no triage, users swing between panic from alarming headlines and false reassurance from wellness blogs.

Safety Comparison: AI Checkers vs Google

Head-to-head studies show AI tools are systematically safer for assessing urgency.

Accuracy and Triage Metrics

| Metric | AI Checkers | Google Search |
|---|---|---|
| Top-1 Diagnosis Accuracy | 34-62% | N/A (no diagnosis) |
| Top-3 Diagnosis Accuracy | 50-70% | N/A |
| Triage Accuracy | 80-92% | User-dependent (0-100%) |
| Unsafe Triage Rate | 3-28% | High (scare tactics) |
| Sensitivity for Urgent Cases | 92-100% | Variable |

Harvard researchers publishing in the BMJ found that symptom checkers gave appropriate triage advice in 80% of emergency cases; Google has no equivalent.
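The metrics in the table can be computed from vignette test results roughly as follows. This is a minimal sketch under stated assumptions: the `VignetteResult` fields, the three-level triage scale, and the four sample vignettes are invented for illustration, and counting any under-triage (advice less urgent than the gold standard) as "unsafe" is a simplification; individual studies may define unsafe triage differently.

```python
from dataclasses import dataclass

# Assumed three-level triage scale, ordered by urgency.
LEVELS = {"self-care": 0, "gp": 1, "er": 2}


@dataclass
class VignetteResult:
    true_diagnosis: str          # diagnosis written into the vignette
    ranked_diagnoses: list[str]  # checker's differential, most likely first
    correct_triage: str          # gold-standard triage level
    advised_triage: str          # level the checker recommended


def evaluate(results: list[VignetteResult]) -> dict[str, float]:
    n = len(results)
    top1 = sum(r.ranked_diagnoses[:1] == [r.true_diagnosis] for r in results)
    top3 = sum(r.true_diagnosis in r.ranked_diagnoses[:3] for r in results)
    triage_ok = sum(r.advised_triage == r.correct_triage for r in results)
    # Count advice LESS urgent than the gold standard (under-triage) as unsafe.
    unsafe = sum(LEVELS[r.advised_triage] < LEVELS[r.correct_triage] for r in results)
    # Sensitivity for urgent cases: share of true ER cases the checker sent to the ER.
    urgent = [r for r in results if r.correct_triage == "er"]
    caught = sum(r.advised_triage == "er" for r in urgent)
    return {
        "top1": top1 / n,
        "top3": top3 / n,
        "triage_accuracy": triage_ok / n,
        "unsafe_triage": unsafe / n,
        "urgent_sensitivity": caught / len(urgent) if urgent else float("nan"),
    }


# Four invented vignettes, for illustration only.
sample = [
    VignetteResult("flu", ["flu", "common cold", "covid-19"], "self-care", "self-care"),
    VignetteResult("uti", ["kidney stone", "uti"], "gp", "gp"),
    VignetteResult("heart attack", ["angina", "heart attack"], "er", "er"),
    VignetteResult("stroke", ["migraine", "tension headache", "sinusitis"], "er", "gp"),
]
print(evaluate(sample))
# {'top1': 0.25, 'top3': 0.75, 'triage_accuracy': 0.75, 'unsafe_triage': 0.25, 'urgent_sensitivity': 0.5}
```

In this toy data, triage accuracy (75%) exceeds top-1 diagnostic accuracy (25%), the same direction the studies report; the specific numbers mean nothing beyond the example.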

Risk of Harm Analysis

AI errs on the side of caution, because over-referral is safer than under-referral; Google can encourage self-treatment of serious problems or avoidance of the ER. Evaluations on real patients, not just vignettes, are still needed.

CollectedMed analyzes the strengths and limits of AI accuracy.

Clinical Evidence on AI-Powered Symptom Checkers

Research is evolving but inconsistent; the 2026 SCARF framework aims to standardize how studies report results.

BMJ and Lancet Studies

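A 2015 BMJ audit found that symptom checkers listed the correct diagnosis first in 34% of cases and gave appropriate triage advice in 80% of emergency cases; a 2022 Lancet study reported accuracy ranging from 30% to 70% depending on condition and tool. The tools perform well on common ailments but falter on rare or complex ones.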

Real-World Validation Gaps

Vignettes can mislead: real patients omit details and describe symptoms with bias. AI also misses nonverbal cues and cannot perform a physical exam. Misdiagnosis creates legal liability risks.

A paper in JMIR Human Factors proposes the SCARF framework.

Key Findings:

- Physicians outperform AI at ranking the correct diagnosis first (BMJ Open).

- Symptoma can predict outbreaks from patterns in user queries.

- LabTest Checker: 74% accuracy and 100% sensitivity for emergencies.

Best Practices for Safe Usage

Treat both as starting points, not substitutes for professional care.

When to Trust AI Checkers

- Common symptoms (flu, UTI): triage is generally reliable.

- Follow the color-coded advice: see a GP for yellow or orange, go to the ER for red.

- Cross-check at least two tools and note their confidence scores.
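Cross-checking can follow the same conservative logic the studies describe, since over-referral is safer than under-referral: when tools disagree, act on the most urgent advice. A minimal sketch, assuming each tool reports a color code plus a confidence score between 0 and 1; the tool names, the "green" self-care code, and the 0.5 confidence threshold are hypothetical.

```python
# Assumed color codes, least to most urgent; "green" for self-care is hypothetical.
URGENCY = ["green", "yellow", "orange", "red"]
ACTION_BY_COLOR = {
    "green": "self-care",
    "yellow": "see a GP",
    "orange": "see a GP",
    "red": "go to the ER",
}
LOW_CONFIDENCE = 0.5  # hypothetical threshold for flagging a shaky result


def combine(outputs: dict[str, tuple[str, float]]) -> tuple[str, list[str]]:
    """Act on the MOST urgent color any tool reported; list low-confidence tools."""
    if not outputs:
        raise ValueError("need at least one tool's output")
    worst = max((color for color, _ in outputs.values()), key=URGENCY.index)
    shaky = sorted(tool for tool, (_, conf) in outputs.items() if conf < LOW_CONFIDENCE)
    return ACTION_BY_COLOR[worst], shaky


print(combine({"tool_a": ("yellow", 0.8), "tool_b": ("red", 0.4)}))
# ('go to the ER', ['tool_b'])
```

Even a low-confidence red result still drives the action here; the confidence list only tells the user which outputs to mention to a doctor.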

Red Flags Requiring ER

- Chest pain, sudden weakness, breathing difficulty.

- The AI recommends self-care, but symptoms worsen.

- Children, older adults, and pregnant people: always seek professional care.

A 2026 review assesses Ada for UK GPs.

Usage Protocol:

- Enter detailed symptoms and update them as they progress.

- Screenshot the results to show your doctor.

- Avoid for chronic or mental health conditions, which need specialized care.

- Verify any sources the tool cites.

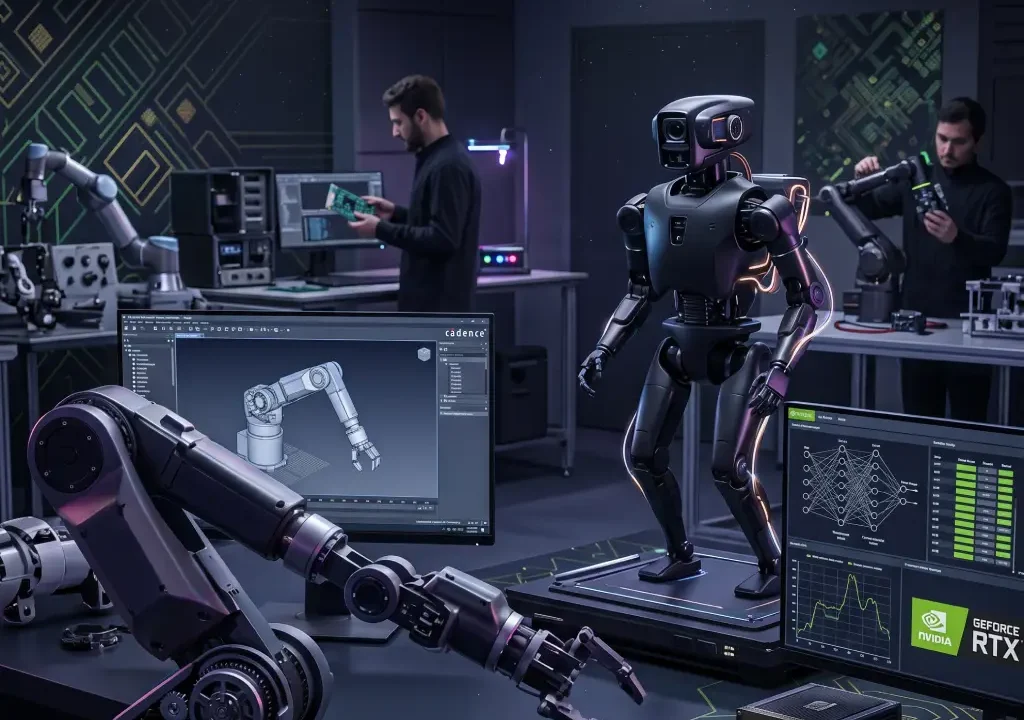

Future of AI-Powered Symptom Checkers

From 2026 onward, integration with electronic health records (EHRs) and wearables is expected to improve accuracy, while FDA oversight looms. Symptoma-style outbreak prediction broadens their usefulness.

An article on PMC warns of legal challenges.

AI-powered symptom checkers edge out Google on structured safety but demand cautious use: rely on them for triage, not diagnosis. With about 80% accuracy on urgency and improving ML, they give sound guidance when paired with clinical judgment. Skip the Google rabbit holes; choose a verified AI tool, then see your doctor.